If your IIS website is like thousands of others, it generates hundreds of megabytes (or gigabytes) of IIS log files every day.

“Big data”, amIrite?

The web is filled with tools to help you store, archive and manage your IIS log files … and even more tools to view, parse, query and analyze them.

But, you are probably not doing any of those things.

And why should you? Despite the supposed treasure trove of data in the logs, it’s not entirely clear how it actually helps you do your job:

- What questions should you answer using the logs? There is little purpose in monitoring IIS logs unless you can derive some insight you will act on.

- When your website is having performance issues, like hangs, slowdowns, queueing, or errors, how do you use the logs to figure out where the issues are & then actually FIX them?

Many IT/DevOps folks look to the IIS logs for answers to performance issues. This is usually because by the time they are looking, the logs are the only record of the issue in their IIS monitoring toolkit that they have access to.

The cold reality of this is: IIS logs DO NOT contain enough information to troubleshoot most performance issues. As a result, it’s too easy to go down the rabbit hole of trying to find “log patterns” that explain the issue, like a DOS attack, an unusual pattern of requests, etc. This is usually a case of hammer syndrome, as in “to a hammer everything looks like a nail”.

No, I am not saying that the logs are not valuable. They can be: as long as you can ask the right questions, and know what questions not to bother asking.

In the course of working with thousands of production IIS sites over the last 10 years at LeanSentry, we’ve taken a very precise and pragmatic approach to the role of IIS logs in tuning and troubleshooting.

In this guide, we’ll:

- Identify critical questions we like to answer using IIS logs, when tuning/troubleshooting an IIS website.

- We’ll also review which common questions must be answered using other ways.

- Finally, we’ll share a set of enhancements that take your IIS log insights to the next level, and provide a lightweight library you can use to instantly gain those enhancements for your website. For more on this, you can jump to part 2: Enhance your IIS logs.

Let’s get into it!

How I would proactively fix your IIS website using your logs

If you asked me to help tune up your website, I am going to start by asking a few key questions to determine your website’s “quality of service”.

Then, I am going to systematically drill down into that “quality of service” measurement to identify where specifically it gets hurt. Do you have slow or failing requests to specific URLs? Are there specific incidents where your website stopped working normally?

Finally, I am going to identify the specific issues causing the drops, and work on diagnosing each issue to get it fixed.

I make it sound simple, right?

While the art of diagnosing performance problems is not exactly simple, the process can be. It starts with systematically asking very simple questions that you can answer, you guessed it, using IIS logs!

Also, let’s get the basics out of the way:

- For this guide, I am using Microsoft LogParser 2.2 to query the logs, with results shown using the Log Parser Lizard GUI tool.

- Examples will use IIS logs located in the c:\inetpub\logs\LogFiles\... directories.

- This guide works for IIS 7.0 logs and above, although we’ll need IIS 8.5 logging features to add the extra IIS log fields we need.

- I am assuming you are using the standard per-site W3C logging format to begin with. For part 2, we will be switching to the built-in IIS 8.5+ Enhanced logging (not IIS Advanced logging and not Custom logging).

Without further ado, let’s get into how you can use said logs to answer those questions for your site.

Question 1: Is my IIS site providing a good quality of service?

I like to answer this question with a single, simple metric we at LeanSentry call “Satisfaction score”. It’s roughly equivalent to the Apdex standard used by many other APM tools.

I blogged about this score way back in 2013 when we first released LeanSentry, and 30K websites later, it’s still the main IIS metric we monitor today.

(Check out the blog post for the rationale as to why it beats “avg. response time” or “95th percentile latency” approaches when it comes to whole-site quality of service monitoring.)

The satisfaction score is a simple percentage. If your site has a 100% satisfaction score, that means that your workload is 100% successful and meets the target response time SLA. If you have slow or failed requests, they will penalize your score.

| Metric | Formula | Definitions |

| Satisfaction score | SAT Score = (FAST requests - FAILED requests - SLOW requests - (SLUGGISH requests / 2)) / TOTAL requests |

TOTAL: all requests

SLUGGISH: requests completed between 1x-4x the SLA (e.g. 2-8 seconds) SLOW: requests completed >4x the SLA (e.g. 8+ seconds) FAILED: requests that fail with an error. |

A great property of this score is that it is both not skewed by outliers, AND it considers ALL requests unlike an average or statistical aggregate metric.

In other words:

- A single request taking 2 hours to complete will not skew your score any more than a single request that takes 10s (if both are considered too long per your SLA).

- If 2% of your requests are taking too long, in the absence of other issues, you will roughly have a 98% score. These 2% of slow requests or failures will NOT be hidden from your view even if your avg. latency or 95% percentile response time is excellent.

So, I will first measure your satisfaction score to know (a) is your site providing a good service level overall and (b) did it have specific incidents where the service level dropped? We can then dig into service level drops to identify opportunities to improve your site.

Computing the satisfaction score for your IIS website

A comment on my original blog post noted that while this score seems appealing, it’s hard to implement because of lack of tools to report this metric.

Indeed, building this reporting was a big part of LeanSentry’s approach to monitoring IIS websites.

However, it turns out that it is very possible to compute a basic satisfaction score using your existing IIS logs!

(And with the enhancements we give further in this guide, it’s possible to get the score to a fully workable level!)

So with that in mind, let’s compute your website’s satisfaction score using your IIS logs:

-- Select components of satisfaction score select COUNT(*) AS TOTALREQUESTS, SUM(CASE DIV(sc-status,399) WHEN 0 THEN 0 ELSE 1 END) AS FAILEDREQUESTS, SUM(CASE DIV(time-taken,2000) WHEN 1 THEN 1 WHEN 2 THEN 1 WHEN 3 THEN 1 ELSE 0 END) AS SLUGGISHREQUESTS, SUM(CASE DIV(time-taken,8000) WHEN 0 THEN 0 ELSE 1 END) AS SLOWREQUESTS, -- SAT score: ROUND(DIV(TO_REAL(MUL(SUB(SUB(SUB(TOTALREQUESTS, FAILEDREQUESTS), SLOWREQUESTS), DIV(SLUGGISHREQUESTS,2)),100)), TO_REAL(TOTALREQUESTS))) AS SATSCORE FROM C:\inetpub\logs\LogFiles\W3SVC2\*.log

Logparser queries can be tricky

If you are confused by the SQL query to compute the sat score, just take my word for it ... the log parser support for functions is pretty sparse so we'll just have to use what we can, with heavy reliance on the PROPCOUNT and PROPSUM functions. At the same time, it's amazing that we can do this at all. Big thanks to Gabriele for making LogParser such a capable tool, used by tens of thousands of IIS pros worldwide.

This uses the SLA time of 2 seconds. We’ll look at how to customize the SLA times for different URLs and requests at the end in enhance your IIS logs.

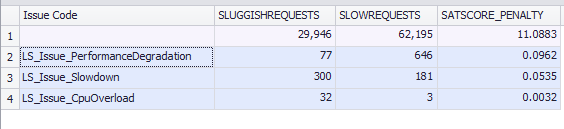

Example results:

Using this query, I can immediately tell that our site has a lower-than-desired SAT score of 82%, which means that roughly 18% of the traffic was not successful. That definitely needs work.

In particular, I know that the bulk of this problem comes from failed requests, so I can fix this by identifying the errors and fixing them (we’ll get into that shortly). I also see that we have some slow and sluggish requests, and we’ll look at breaking those down as well.

A note on the Time-Taken field

No discussion of IIS logs is complete without noting that the default time-taken field that we are using to determine response times includes the network time - the time to send the response to the client. In other words, the IIS log time taken field tracks TTLB (time to last byte).

As a result, clients on slow or mobile connections may log very long response times, even though your application generated the response quickly. This may tag fast requests as slow and lower your satisfaction score.

We’ll correct this in part 2 by logging the “Application processing time” as an alternative to time-taken, so you can switch to it for the purposes of Satisfaction score calculations.

Question 2: Where are the issues?

Now that I have a general sense of the problem (overall sat score is fairly low), I want to localize it: figure out where in the site I am losing requests so I begin to identify the specific issues I can fix.

To do this, let’s break down our satisfaction score by URL:

-- Break down satisfaction by url

select

cs-uri-stem AS URL,

-- I can also group related urls by parent path, e.g. extract_path(cs-uri-stem) AS URL

-- Sat score components:

COUNT(*) AS TOTALREQUESTS,

SUM(CASE DIV(sc-status,399) WHEN 0 THEN 0 ELSE 1 END) AS FAILEDREQUESTS,

SUM(CASE DIV(time-taken,2000) WHEN 1 THEN 1 WHEN 2 THEN 1 WHEN 3 THEN 1 ELSE 0 END) AS SLUGGISHREQUESTS,

SUM(CASE DIV(time-taken,8000) WHEN 0 THEN 0 ELSE 1 END) AS SLOWREQUESTS,

-- SAT score:

ROUND(DIV(TO_REAL(MUL(SUB(SUB(SUB(TOTALREQUESTS, FAILEDREQUESTS), SLOWREQUESTS), DIV(SLUGGISHREQUESTS,2)),100)), TO_REAL(TOTALREQUESTS))) AS SATSCORE

FROM C:\inetpub\logs\LogFiles\W3SVC2\*.log

GROUP BY URL

ORDER BY TOTALREQUESTS DESC

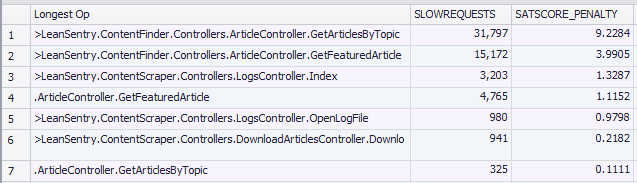

Now I can see which URLs experienced lower satisfaction and why:

This is useful to see what caused satisfaction drops for each URL. For example, the /Preload/GetSlides url had a fairly low satisfaction score of 64%, and again mostly because of errors. This is definitely something I need to fix, as this url is responsible for providing help content to LeanSentry users and I also don’t expect this url to ever fail.

However, what I probably really want here is a breakdown of which URLs caused the net satisfaction loss to my site’s satisfaction score as a whole, which is a bit different from their individual satisfaction scores. I want their satisfaction score “penalty” contribution.

Let’s get it:

-- Break down satisfaction penalty to the website by url select cs-uri-stem AS URL, -- Sat score components: COUNT(*) AS TOTALREQUESTS, SUM(CASE DIV(sc-status,399) WHEN 0 THEN 0 ELSE 1 END) AS FAILEDREQUESTS, SUM(CASE DIV(time-taken,2000) WHEN 1 THEN 1 WHEN 2 THEN 1 WHEN 3 THEN 1 ELSE 0 END) AS SLUGGISHREQUESTS, SUM(CASE DIV(time-taken,8000) WHEN 0 THEN 0 ELSE 1 END) AS SLOWREQUESTS, -- SAT score: ROUND(DIV(TO_REAL(MUL(SUB(SUB(SUB(TOTALREQUESTS, FAILEDREQUESTS), SLOWREQUESTS), DIV(SLUGGISHREQUESTS,2)),100)), TO_REAL(TOTALREQUESTS))) AS SATSCORE, -- SAT score penalty (to the site): MUL(PROPCOUNT(*), SUB(100.0, DIV(TO_REAL(MUL(SUB(SUB(SUB(TOTALREQUESTS, FAILEDREQUESTS), SLOWREQUESTS), DIV(SLUGGISHREQUESTS,2)),100)), TO_REAL(TOTALREQUESTS)))) AS SATSCORE_PENALTY FROM C:\inetpub\logs\LogFiles\W3SVC2\*.log GROUP BY URL ORDER BY SATSCORE_PENALTY DESC

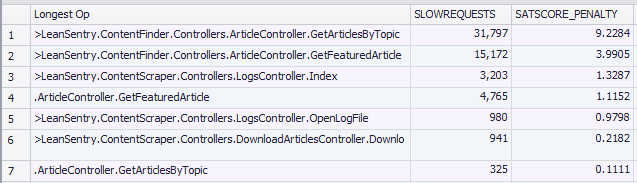

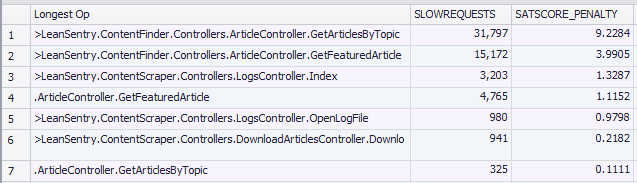

Here it is:

I can now see that the bulk of my site’s satisfaction score is lost due to the /Dashboard/Track url, specifically due to failed requests on that url.

This is now specific enough for me to investigate and address. I will do this by inspecting the errors causing failed requests on this URL.

Additionally, I can make a short list of other issues causing the highest satisfaction score impact and repeat this process to address them.

Next step, figuring out the cause of each problem we identified.

Question 3: What is causing the issues?

At this point, it’s worth specifically defining the “issues” we are monitoring for in the IIS logs. It’s simple, there are only two things:

- Failed requests (caused by errors).

- Slow/sluggish requests (caused by hangs, application slowdowns, CPU or memory resource exhaustion, queueing, threading issues, etc)

Our satisfaction score queries thus far have clearly identified which URLs have slow and failed requests, and whether slow or failed requests are the bulk of the issue.

However, we are about to hit some IIS logging limitations that prevent us from getting root causes of performance problems. IIS logs were never meant to have the answers to WHY performance issues happen, only whether they do.

In fact, more data is almost always needed to determine the root causes of errors and slow requests. This is exactly why we built LeanSentry, to aggregate all the necessary data sources to detect specific issues, AND to analyze the runtime state of the application to diagnose their root causes. To do this, we monitor IIS logs as a starting point, and then perform Just-In-Time diagnostics using a dozen of other data sources to help us get to the bottom of each issue when it happens.

But say you don’t have LeanSentry. Let’s see how we can, using IIS logs only, get the most insight into what causes slow and failed requests.

In the second part of this guide, we’ll significantly enhance that ability by adding specific custom fields to your IIS logs using our free, lightweight ApplicationMonitoring.dll library.

What is causing slow requests?

(In part 2, we’ll enrich your IIS logs to tell you the longest application code action that caused slow requests. For details see enhance your IIS logs.)

This is a hard question to answer without additional data.

To begin with, just using the logs, we can make a high probably guess to help classify the problems we are having as either “hangs” or “slow requests”:

- Hangs: specific incidents where a large percentage of requests across multiple URLs were slow, typically caused by an application “lockup”, excessive concurrency, or server overload due to CPU or memory.

- Slow application code: a lower, but ongoing number of slower requests on specific URLs, typically due to longer-running portions of your application code (like SQL queries or remote web service calls).

A simple way to determine this is by looking at the number and percentage of slow requests in the site over time. You may say, “yeah but how do you know that”. I know that because that’s the pattern we’ve established across ~30K IIS websites we’ve diagnosed with LeanSentry over the last 10 years. Barring a few exceptions, this is just how that cookie usually crumbles.

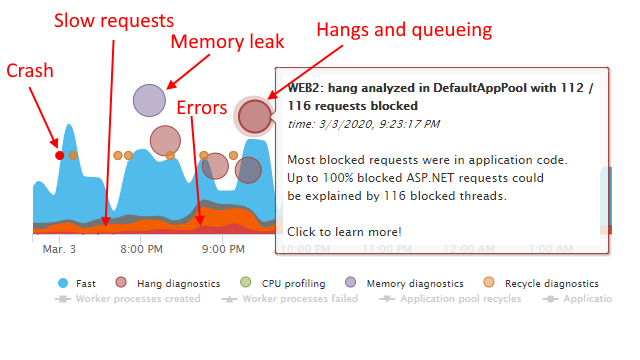

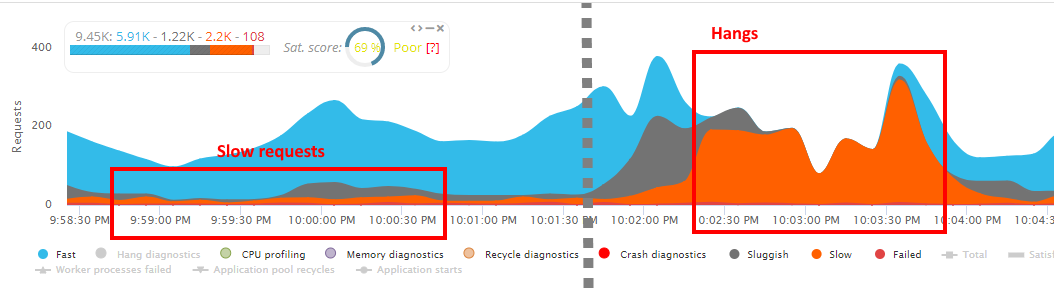

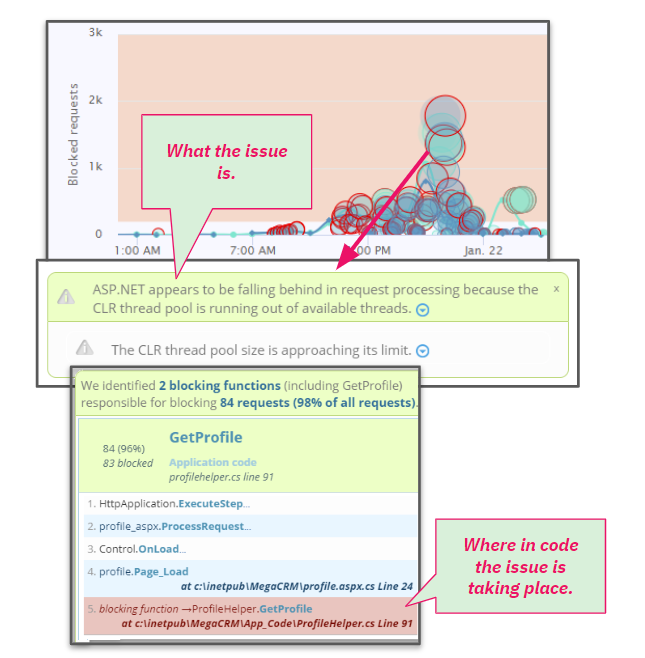

This phenomenon becomes very clear when viewing the request health using LeanSentry’s traffic visualization:

The reason for this is actually simple. If your site is experiencing an application-level hang or server-level overload, it’s going to eventually affect most or all of the requests. For example, once enough request threads are blocked, and the thread pool becomes exhausted, all new requests will begin to wait for threads, resulting in a slowdown for 100% of your incoming traffic.

The % of slow requests during these types of incidents will be substantially higher than normal. Otherwise, you are going to have occasional slow requests (a fairly stable % for each URL) while others will be fast.

Here is how to scan for hangs using your IIS logs and logparser:

-- Select components of satisfaction score over time (1 minute periods) select -- Time period TO_LOCALTIME(QUANTIZE(TO_TIMEST&(date, time), 60)) AS TimePeriod, -- Sat score components: COUNT(*) AS TOTALREQUESTS, SUM(CASE DIV(time-taken,8000) WHEN 0 THEN 0 ELSE 1 END) AS SLOWREQUESTS, MUL(100.0, PROPSUM(CASE DIV(time-taken,8000) WHEN 0 THEN 0 ELSE 1 END)) AS SLOWREQUEST_PERCENT, -- SAT score: ROUND(DIV(TO_REAL(MUL(SUB(SUB(SUB(TOTALREQUESTS, FAILEDREQUESTS), SLOWREQUESTS), DIV(SLUGGISHREQUESTS,2)),100)), TO_REAL(TOTALREQUESTS))) AS SATSCORE FROM C:\inetpub\logs\LogFiles\W3SVC2\*.log GROUP BY TimePeriod

This produces a time series that you can plot, filtering to any incidents with 2x the typical slow request percentage from the overall time period.

Enhancing your IIS logs with causes of slow requests

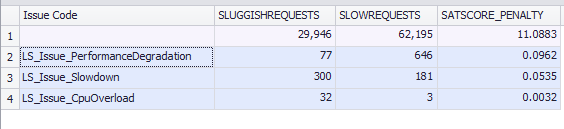

In the second part of this guide, I’ll show you how to step up your slow request game quite a bit. You’ll be able to tag your slow requests in the IIS log with the issue causing the app-wide slowdown:

Your slow requests that were affected by CPU overload on the server will get tagged with the LS_Issue_CpuOverload issue code, so you’ll be able to link the CPU incident with the associated slow requests.

The second thing we’ll be able to log is the application code that contributed the most execution time to the slow requests. This will rely on trackers that you can add to your code using LeanSentry.ApplicationMonitoring.dll.

Head over to Enhance your IIS logs section for the step by step.

Diagnosing causes of hangs

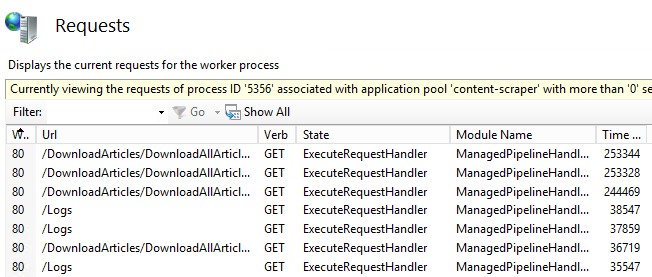

Did you find hangs? Hangs are occasional incidents during which many requests pile up and appear blocked. A hallmark of hangs is a sharp increase in active requests, and many requests taking more than normal amount of time. LeanSentry determines this using the IIS Runtime State and Control API, similar to appcmd list requests or viewing active requests in INETMGR.

Hangs can be caused by many different factors, including:

- Application deadlocks. All requests in the application are blocked in the code, due to taking a lock. This can only be identified by attaching a debugger at the moment of the hang (which LeanSentry does when diagnosing hangs).

- Thread pool exhaustion or thread pool scaling issues. All threads in the application are blocked, and the thread pool is not able to provide sufficient threads to process new requests, causing them to become queued. Or, the threadpool cannot provide enough threads to enable completions of async tasks, resulting in all requests becoming blocked waiting for threads. This can only be identified in a debugger, and is part of LeanSentry’s hang diagnostics.

- Slow application code. The requests may be blocked by slow data retrieval, e.g. slow SQL queries, or slow responses from Redis/cloud-based databases/remote web services. Again, this is typically only identifiable with a code-profling APM tool or a debugger-based diagnostic tool such as LeanSentry.

- CPU overload. If your server’s CPU is overloaded, it can cause request processing tasks to take a much longer time, impacting your response times. CPU overload is usually caused by CPU-intensive application code (e.g. LINQ query compilation, JSON serialization, regular expressions, logging, dependency injection, reflection, etc). But, in many modern web apps, it can also be caused by high concurrency overwhelming the processor’s ability to process tasks concurrently. This is becoming even more and more common with parallel tasks and async code.

- High GC. This is another tough one, where your application spends a large percentage of it’s processing time trying to clean up .NET objects. If your GC time is over 50% and higher, it’s going to cause poor performance.

Unfortunately, you won’t be able to tell which of these issues caused your hangs by monitoring the IIS logs. At the very least, you can now tell THAT you’ve had a hang. To identify the cause of the hang, you’ll need to analyze a snapshot of your worker process during the hang .

Or, if you have LeanSentry, you can get the issue code for any website-level issues directly in your IIS logs. This is courtesy of LeanSentry Hang diagnostics, which continuously monitors your application pools for hangs and auto-determines their causes. Head over to the Enhance your IIS logs guide to add this issue information to each slow request in your logs.

Automatically identify and diagnose IIS website hangs

Every IIS website will occasionally experience hangs. It’s just a fact of life. Resolving these hangs depends on being able to analyze the application’s state at the exact moment of the issue. LeanSentry Hang diagnostics were developed specifically to do this, and ended up being the most important part of our production diagnostic arsenal.

LeanSentry continuously monitors your IIS application pools for signs of hangs, in a way that requires no invasive profilers. If a hang is detected, LeanSentry occasionally performs a full diagnostic to identify the cause of the hang down to the app code.

If you are struggling with hangs, be sure to check out LeanSentry Hang diagnostics.

Diagnosing causes of slow requests

If you don’t have hangs, your slow requests are likely caused by long-running tasks in the application code. A hallmark of this is a steady, low percent of slow requests in the application URLs that have slow code.

These slowdowns can be caused by any number of things, slow SQL queries, large data sets belonging to different users or application entities, slow responses from web services, etc etc.

To diagnose these, and identify the code that needs to be optimized, you’ll need to use an APM tool or a just-in-time diagnostic tool like LeanSentry.

Alternatively, you can use part 2 of this guide: Enhance your IIS logs to enrich your IIS logs with long running operation tracking, so you can see what makes your requests slow directly in your IIS logs.

What is causing failed requests?

Here, IIS logs give you a bit more information with it’s status, substatus, and win32 status log fields.

Unfortunately, this information is limited to a point where it will often prevent you or your developers from identifying the error enough to fix it.

Error detail limitations of IIS logging

| Type of error | Example of error | Limitation |

| Http.sys errors | 503 Service Unavailable, 503 Queue Full, 400 Bad Request, more errors here. | Not logged to IIS logs.

Have to also check HTTPERR logs. |

| IIS error | 403.14 Forbidden, 500.19 Configuration error, more errors here. | Good enough!

The status, substatus, and win32 error code fairly accurately describe the issue. You can often look up the error explanation using this information, and coupled with the URL, it’s usually enough to fix the error. |

| ASP.NET application exception | Any exception in your ASP.NET application code. | Exception details missing. The error is logged to IIS log as a 500.0.No exception information is given, so a developer will likely not act on this error unless its easily reproducible via the url and querystring. You may be able to find exception details in the Application event log based on your health monitoring settings. |

| Classic ASP error | ASP_0145 New_Application_Failed, 800a0401 Expected_end_of_statement, or any other script error. | Good enough!

Classic ASP will log the error code, and line number, to the log so that you can identify the error pretty well. |

| PHP error | Any PHP script or framework error. | Exception details missing. Logged to IIS log as a 500.0. You’ll have to configure error logging to a PHP error log. |

The vast majority of errors experienced by production websites fall into the “application error” category. E.g. ASP.NET application exceptions, PHP errors, etc.

As a result, you can tell that you’ve had an error, but sadly your developers won’t have enough to go on. You can use an error monitoring product to complete the picture here, or manually correlate error details from other logs with the errors you see in the IIS log.

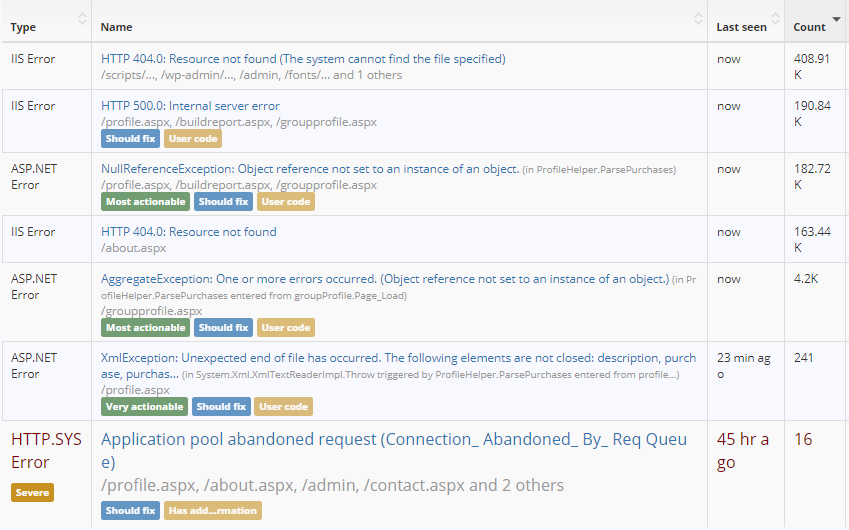

Tracking errors across the entire IIS/ASP.NET stack with LeanSentry

From the very beginning, I wanted LeanSentry to track errors across the entire stack. This includes HTTP.SYS errors, IIS errors, any application pool and worker process failures, as well as ASP.NET application exceptions. This makes sure no failures escape visibility, but also so that I can more easily group and prioritize different issues for fixing.

Since then, we’ve also added some really cool ways to make fixing errors more actionable.

This now also includes the ability to capture a debug dump of any .NET exceptions, which could otherwise be nearly impossible for a developer to reproduce.

If you are looking for a more actionable way to track and fix IIS errors, check out our getting started article Fix IIS, ASP.NET, ASP, and Http.sys errors.

That brings us to part 2 of this guide: enhancing your IIS logs with additional detail that you can use to fully identify errors causing your failed requests, even if you don’t have a full stack error monitor.

In part 2, we'll add the full error details to your IIS logs so your developers can actually fix the errors.

(This includes exception type, method, message, and even the line number if available.)

Enrich your IIS logs

Let’s take stock of what we’ve been able to do so far.

We’ve gone from “IIS log, what is it good for” to a simple, systematic process you can follow to measure your website’s quality of service, and break it down into issues:

- We’ve measured our website’s satisfaction score, and broken it down into specific URLs and how their slow requests/failed requests penalize our site’s service level.

(However: we are using a single SLA time for the entire site, and don’t have a good way to prioritize important pages/exclude unimportant pages from the calculation.)

- We’ve determined if we have hangs, or slow requests.

(However: we don’t know WHAT caused the hangs to investigate them further, was it cpu overload, thread pool exhaustion, or slow application code.)

- We’ve determined at a high level what kind of errors cause our failed requests.

( However: we don’t know the exact error details so our developers likely won’t look at the errors.)

This is a good starting point, but we need more detail to make the issues we identified in the logs actionable.

This is exactly what we’ll do in part 2 of this guide: Enhance your IIS logs.

Best of all, I’ll show you how to use our free, lightweight LeanSentry.ApplicationMonitoring.dll library to enhance your IIS logging without a single line of code.

Investigating performance issues?

If you are looking to find the answers to IIS performance issues: hangs, CPU overloads, memory leaks and the like, deploy LeanSentry to diagnose and fix those issues the right way. (You won't find those answers in the IIS logs)